The pursuit of artificial general intelligence (AGI) has largely been a race of logic. We have trained models to write complex code, solve advanced calculus, and pass graduate-level STEM exams. Yet, human intelligence is not merely a collection of logical deductions. It is deeply rooted in context, nuance, and culture.

To build models that truly understand the human condition—and by extension, the complex, unstructured realities of the enterprise world—they must be able to read between the lines. Currently, Vision-Language Models (VLMs) look at the world like tourists: they can identify a clock, a flower, or a brushstroke, but they completely miss the philosophy, history, and symbolism behind them.

Today, we are thrilled to introduce VULCA-BENCH, a multicultural art-critique benchmark designed to evaluate Vision-Language Models' cultural understanding beyond surface-level visual perception. Built in collaboration with researchers from the Duncan of Jordanstone College of Art & Design (DJCAD) at the University of Dundee, VULCA-BENCH is not just an evaluation of art—it is a stress test for the highest levels of humanistic reasoning in AI.

Read the paper → · GitHub · HuggingFace Dataset

The Turing Test for Cultural Context

Art is arguably the most contextually dense data humans produce. Existing VLM benchmarks predominantly measure L1-L2 capabilities, such as object recognition, scene description, and factual question answering. They fail to measure whether a model can interpret symbolic meanings, appreciate aesthetic traditions, or engage with philosophical concepts embedded in visual content.

Consider a traditional Chinese ink painting of plum blossoms. A frontier VLM can easily identify the "plum blossoms" and the "ink wash technique". But can it grasp deeper aesthetic principles like qiyun shengdong ("spirit resonance") or yijing ("artistic conception") that define Chinese painting philosophy?

To measure this, VULCA-BENCH operationalises cultural understanding using a five-layer framework:

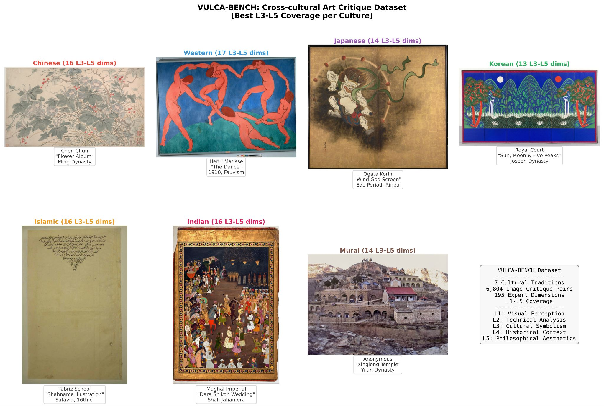

The dataset contains 7,408 matched image-critique pairs spanning eight distinct cultural traditions, supported by 225 expert-defined, culture-specific dimensions. The corpus is distributed as follows:

A cross-cultural case gallery illustrating the benchmark's breadth across these traditions is shown below:

Results: Where Frontier Models Hit a Wall

The results expose a systemic blind spot in today's leading architectures. While frontier models excel at basic visual and technical analysis, they collapse when asked to reason critically about culture.

We measure this using an overall Dimension Coverage Rate (DCR)—the percentage of culture-specific expert dimensions successfully captured by the model's generated critique—and ΔL (Layer-Gap), which represents the "cultural depth deficit," or the performance drop between basic perception and deep interpretation.

Our pilot evaluations show that frontier models experience a massive 31 to 40 percentage-point drop (ΔL) from visual perception (L1-L2) to cultural interpretation (L3-L5). When forced to navigate these higher layers, models often resort to using "surface-level terminology" without understanding the visual manifestations, or they commit "historical anachronisms" by conflating distinct cultural eras.

They lack the deep, native cultural knowledge required for true comprehension—knowledge that cannot be acquired simply by scraping the open web.

Powering the Self-Evolving AI

At Analogy AI, we view the abstraction and complexity of cultural interpretation not as a niche academic challenge, but as the ultimate test for data infrastructure. If an automated pipeline can source, verify, and structure this level of expert human reasoning, it can solve the data bottleneck for any complex domain.

We are not just evaluating where frontier models fail today; we are building the continuous, high-quality data engine required to push them forward. By closing the loop—where better data feeds model improvement, and smarter models help curate even better data—we are building the infrastructure for AI that never stops evolving.